
Play Video: Techno Synesthesia: Four Seasons / Withering Tulips Twelve Tone Scale / 02:18 / 2018


Kenji Kojima's Biography

I have been experimenting with the relationships between perception and cognition, technology, music, and visual art since the early '90s. I was born in Japan in 1947 and moved to New York in 1980. For my first 10 years in New York City I painted in egg tempera, using medieval art materials and techniques. My paintings were collected by Citibank, Hess Oil, and others. Personal computers improved rapidly during the '80s, and I came to feel more comfortable with computer art than with painting, so I switched my artwork to digital in the early '90s. My early digital works were archived in the New Museum - Rhizome, New York. I developed the computer software "RGB MusicLab" in 2007 and created an interdisciplinary work exploring the relationship between images and music. I programmed the software "Luce" for the project "Techno Synesthesia" in 2014. My digital art series "Techno Synesthesia" has been exhibited in New York and at media art festivals worldwide, including in Europe, Brazil, and Asia, and in online exhibitions by ACM SIGGRAPH, FILE, and others. The anti-nuclear artwork "Composition FUKUSHIMA 2011" was collected in the CTF Collective Trauma Film Collections / ArtvideoKoeln in 2015. LiveCode programmer. http://kenjikojima.com/


Binary as an art material

Binaries can be processed directly by a computer.

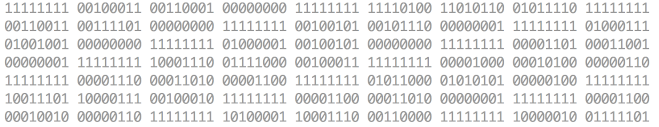

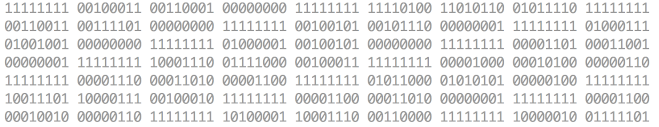

Binary notation uses only "0" and "1", like the numbers in the figure above.

Table of contents:

• Overview

• RGB Music

• Cipher Art

• Techno Synesthesia

• Work Concept

Overview:

This writing is about three projects that use binaries as an art material.

These projects are art that incorporates the ideas and methods of computer science; they are not intended as science. Binary operations require logic to be built up step by step. A logical and elegant construction has a certain inner beauty. But the accumulation of beauty neither proves anything nor seeks scientific results. The rips of meaninglessness in purposeless beauty may be the reason for art. The projects use the programming language "LiveCode".

Even before I started computer art, I was always interested in the materials that form the most basic construction of a painting. The idea of using "binary", the smallest unit of data, as an art material was influenced by the technique of medieval painters, who mixed colored powder with a medium to make their paints.

I also feel a common passion with prehistoric cave artists, who rubbed colored earth powders onto calcareous cave walls. Nowadays "colors", mixtures of pigment and medium packed in tubes, can easily be bought at art supply stores without any awareness of the raw materials or the process of making them. These colors usually determine the final form of the painting. As long as the paint suits its purpose, whether oil painting, watercolor, house paint, or spray paint, it is easy and convenient.

Similarly, most digital art completes the artwork by adding visual variations and combinations that follow the superficial forms of past visual art, such as paintings, photographs, and movies, using prepared programs or shortcuts and, in some cases, interactivity. Interactivity that appears to be new is also limited to information that does not confuse (or does confuse) art connoisseurs. But in the future, digital art has the potential to cross over between the senses, or to create entirely new art forms that have never been seen before.

All computer data is made up of binaries. An important concept is that the same data can be converted into other forms. If data collected for a certain purpose is converted to another format and output, it will appear as a file in that output format. This is a decisive difference from art that pursues illusion.

"RGB Music", which we will discuss first, converts pixels, the smallest units of an image, into MIDI music format and outputs them. One color pixel is made up of four binaries. To turn pixels into music, they are reduced to binary and converted to MIDI files. Aside from the aesthetics of music in general, the converted music is formally built on the 12-note scale. In the same way, files created for other purposes can be reduced to binary to create a file in a completely different format. Whether that file is useful or useless is a question of value in a different dimension. The two projects discussed later use the technology of "RGB Music" and take other approaches.

I am working on converting images to music because I am interested in exploring the common elements of sight and hearing, but it should also be possible to convert data between other senses. Of course, even for images and music, there are many algorithms that differ from my method. However, assembling art from binaries also means that the artist creates the development environment that realizes the idea of the art. Digital art is still in its infancy and is now testing basic possibilities that are not bound by past methods. I have no idea in which direction we are heading. What I can say now is that ideas built from binary are not bound by past concepts, and I strongly feel the possibility of creating new art.

Another reason I stick to digital art is that I think it is a method a little cleaner for the earth, and it lets me think more toward the future than other art materials do. It is unbearable for an artist to think that the artwork will become garbage polluting the earth 100 years from now. Who can deny that possibility? We have to think about the quality of desire. The 21st-century artist needs an ethics that takes responsibility for the future of this planet. Choosing to stop making art with physical materials does not deny the artwork of the past. Art as a digital creative commons changes the quality of desire.

Additional statement 1:

In March 2021, shortly after I wrote this, a piece of digital art was sold at auction for about $70 million. It is digital art that incorporates an NFT (Non-Fungible Token), a method of proving uniqueness on a blockchain. I would like to write a little about this. First of all, the question is: "Must art be one of a kind?" That would be meaningful from the standpoint of art collections and the art market. I cannot go into the history of collecting here, so I will give only a brief conclusion. I think that the meaning of art is only one. My conclusion is that NFT digital art is a new alternative form of monetary value, which through the history of gold coins and banknotes has come to be recorded only in ledgers, and that it has nothing to do with the meaning of digital art. It is one move in the money game. In addition, its large power consumption is destructive to the environment. And in the near future, quantum computers may break the blockchain.

Additional statement 2: May 2021

I found a very interesting Japanese podcast interview about the book "Human History at the End of the Media: 'Philosophy and Mankind'" by Yuichiro Okamoto. The series has 11 episodes in total. Interviewer: Megumi Wakabayashi. Vol. 10 is particularly closely related to what I wrote: "Since all computer data is composed of binaries, the important concept is that the same data can be converted to other forms."

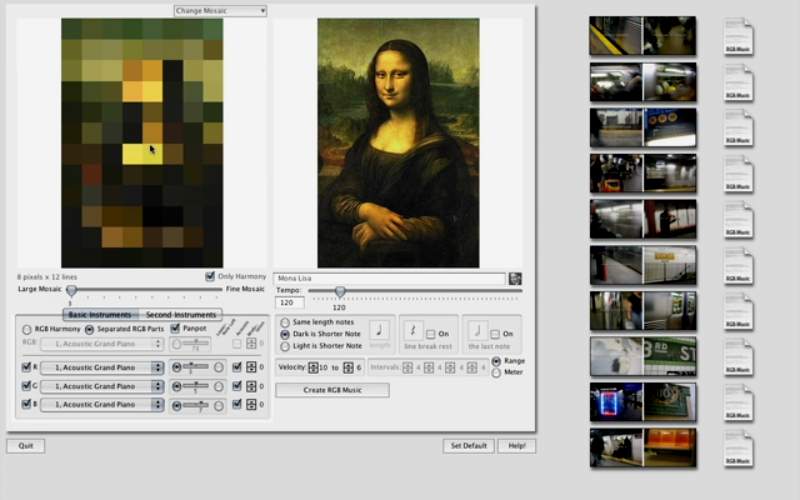

Since a photographic image contains innumerable pixels, first treat the image as a mosaic and take each grid cell as its average color. A fine mosaic, with many colors, is converted to long music; a rough mosaic, with fewer colors, is converted to short music. The converted notes are played on MIDI instruments. This is the outline of "RGB Music".
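The mosaic step can be sketched in a few lines of Python. This is only an illustration under assumptions, not the code of "RGB MusicLab" (which is written in LiveCode): the function name `mosaic_average`, the list-of-rows image layout, and the integer averaging are all hypothetical choices.

```python
def mosaic_average(pixels, cell):
    """Reduce an image to a mosaic: one average (R, G, B) color per grid cell.

    `pixels` is a list of rows; each row is a list of (r, g, b) tuples.
    `cell` is the side length of a square grid cell, in pixels.
    """
    height, width = len(pixels), len(pixels[0])
    mosaic = []
    for top in range(0, height, cell):
        row = []
        for left in range(0, width, cell):
            # Collect every pixel inside this cell (cells at the edge may be smaller).
            block = [
                pixels[y][x]
                for y in range(top, min(top + cell, height))
                for x in range(left, min(left + cell, width))
            ]
            n = len(block)
            row.append(tuple(sum(p[c] for p in block) // n for c in range(3)))
        mosaic.append(row)
    return mosaic


# A 4 x 4 test image: left half red, right half blue.
image = [[(255, 0, 0)] * 2 + [(0, 0, 255)] * 2 for _ in range(4)]

fine = mosaic_average(image, 1)   # fine mosaic: many colors, longer music
rough = mosaic_average(image, 2)  # rough mosaic: fewer colors, shorter music
print(sum(len(r) for r in fine), sum(len(r) for r in rough))
```

Each mosaic cell later becomes a group of notes, so the cell size directly controls the length of the resulting music.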

You can download the application "RGB MusicLab" from here.

It does not work on macOS 11 Big Sur. Please use an older macOS for the Mac version.

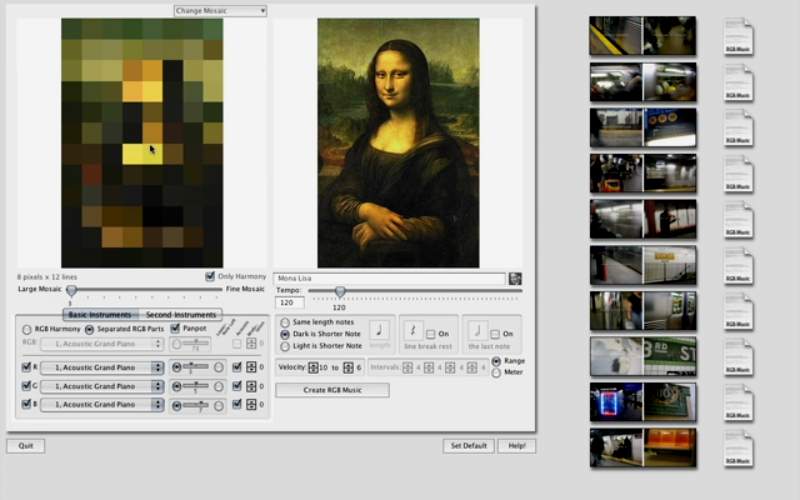

The video below shows the basic operation of the application "RGB MusicLab" and the creation of files for the actual media art installation "Subway Synesthesia". "Subway Synesthesia" is the exhibition title given by the gallery director when the work was shown at the non-profit art gallery "AC Institute" in New York City in 2008. The exhibition ran a desktop application created for it, not a projection of a video file.

Play Video: Application "RGB MusicLab" & media art installation "Subway Synesthesia" / 06:36 / 2008

I tabulated the basic relationship between pixels and binaries in an ordinary computer image. Top row of the table: an image of only three pixels, from the left "Red", "Green", and "Blue". 2nd row: one pixel consists of four binaries (bytes). "Alpha", at the beginning of the pixel, is the transparency of the image, followed by "Red", "Green", and "Blue" in that order. The three side-by-side pixels therefore contain 3 x 4 = 12 binaries. 3rd row: the binaries numbered in the order in which they are lined up. 4th row: the binaries shown as decimal numbers from 0 to 255. 5th row: the same values written in binary. When the other color values in a pixel are zero, the largest value (255 in decimal) gives the optically pure color "Red", "Green", or "Blue". An "Alpha" of 255 is opaque.
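The table described above can be reproduced with a short Python sketch. The byte order (Alpha, Red, Green, Blue) follows the description in the text; the variable names and the helper `as_table` are illustrative assumptions.

```python
# Three opaque pixels: pure red, pure green, pure blue,
# each stored as (Alpha, Red, Green, Blue).
pixels = [
    (255, 255, 0, 0),
    (255, 0, 255, 0),
    (255, 0, 0, 255),
]

# Flatten to the raw sequence of binaries: 3 pixels x 4 values = 12 values.
data = bytes(value for pixel in pixels for value in pixel)


def as_table(data):
    """Return rows of (position, channel, decimal value, binary digits)."""
    rows = []
    for position, value in enumerate(data, start=1):
        channel = "ARGB"[(position - 1) % 4]
        rows.append((position, channel, value, format(value, "08b")))
    return rows


for row in as_table(data):
    print(row)
```

The second row printed is the Red value of the first pixel, 255 in decimal and 11111111 in binary.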

Let's proceed with various prototypes made with the application "RGB MusicLab".

In 2007 I made music from a Kandinsky painting; it was the first RGB Music file. I chose Kandinsky because he thought about the relationship between abstract painting and music. At that time I ran the file directly in a desktop application, so I did not record it as a video. In 2021 I searched for this file and made it into a video, using version 41 of "RGB MusicLab", the last version developed. It may have been the last chance to keep a moving record.

Play Video: RGB Music: Wassily Kandinsky Composition VII / 04:20 / 2007

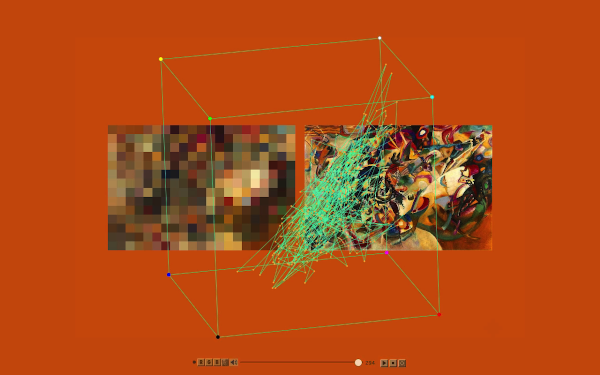

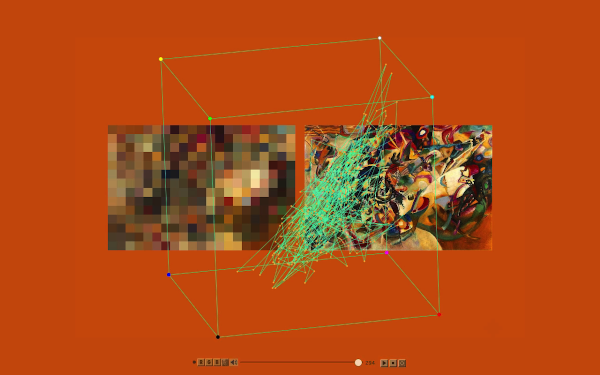

I created a piano piece from the RGB values of an image of Leonardo da Vinci's Mona Lisa. The piano sound the program is playing, the target icon that points to the current position as it moves over the mosaic on the image, and the background color are synchronized. The 3D wireframe in the center is an RGB color cube: values from 0 to 255 are assigned to the X, Y, and Z axes, showing where the note currently being played lies in the space of the color cube. The dots are connected by lines as the music progresses. In the file created by the application "RGB MusicLab" the viewpoint on the cube can be changed with the mouse, but this does not work here because it is a video file.

Play Video: Leonardo da Vinci’s Mona Lisa Smile Variations Harmonic Minor / 04:02 / 2007

Received Pixelstorm Award "RGB into Music" Honorary Mention, Basel, Switzerland, 2009

The website has 8 study variations made from the Mona Lisa image.

MonaLisa Smile Variations: Scale Studies.

The second Mona Lisa from the top shifts the colors of the chromatic scale to the corresponding notes of the "Harmonic Minor Scale". The ear can clearly tell the difference in sound, but normal human vision cannot tell the difference in the image. In the Mona Lisa images below it, the colors of notes that do not belong to the scale are set to "0", which mutes them. Since the QuickTime 7 MIDI instrument that was used in the browser when the original was created is no longer available, the website now plays a JavaScript MIDI instrument. The sound quality is much lower.
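The two scale treatments described above, shifting notes onto the scale and muting notes outside it, can be sketched as follows. This is a minimal illustration, not the filter used in "RGB MusicLab": the function names are hypothetical, notes are assumed to be MIDI note numbers, and shifting downward to the nearest scale note is an assumption, since the text does not say in which direction notes are moved.

```python
# Semitone offsets of the harmonic minor scale from its root.
HARMONIC_MINOR = (0, 2, 3, 5, 7, 8, 11)


def snap_to_scale(note, root=0, scale=HARMONIC_MINOR):
    """Move a note down to the nearest pitch that belongs to the scale."""
    while (note - root) % 12 not in scale:
        note -= 1
    return note


def mute_outside_scale(note, root=0, scale=HARMONIC_MINOR):
    """Keep notes that belong to the scale; replace all others with 0 (silence)."""
    return note if (note - root) % 12 in scale else 0


chromatic = list(range(60, 72))  # one chromatic octave starting at middle C
print([snap_to_scale(n) for n in chromatic])
print([mute_outside_scale(n) for n in chromatic])
```

Shifting keeps every note sounding but changes some pitches, while muting keeps the pitches and removes notes, which matches the difference between the two kinds of variation described above.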

Next is a simple color pattern. I assigned a different instrument to each of R, G, and B. I titled it "Brown Diamonds in North Africa" because of the continuous pattern of brown diamonds and the feeling of the music. It is a color pattern, and it also functions as a score.

Play Video: RGBMusic / Brown Diamond in North Africa / 3:11 / 2007

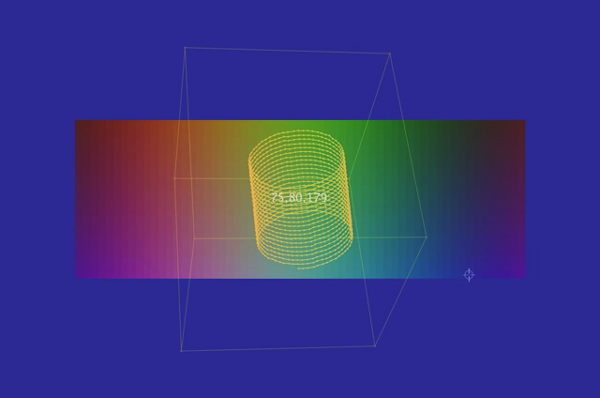

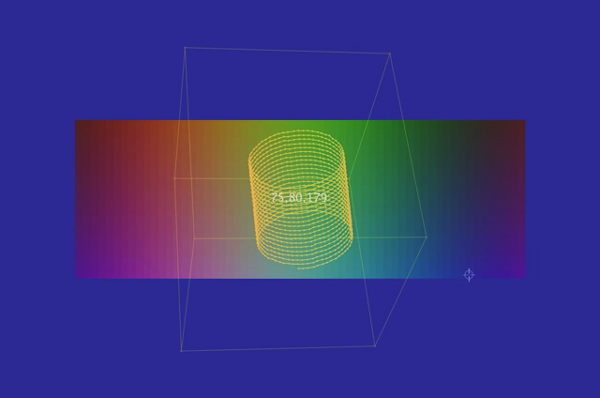

Starting from the idea of the RGB color cube, RGB values can be replaced with XYZ values to create music from the coordinates of a three-dimensional surface. With the center of the sphere placed at the center of the RGB color cube, all points are at an equal distance from the center. This forms a unique harmony, like classical music (I am not pursuing music theory). The instruments of a string trio, violin, viola, and cello, are assigned to X, Y, and Z. The viewpoint on the RGB color cube changes and rotates according to the speed of the melody. The background colors are the three notes being played.
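Random points on such a sphere can be generated with the sketch below. It assumes a cube center of 127.5 on each axis and a radius of 100, both chosen only for illustration, and it uses a standard method (normalizing a Gaussian sample) that is not necessarily how the original work picked its points.

```python
import math
import random

CENTER = 127.5  # assumed center of the 0..255 RGB color cube on every axis


def random_sphere_point(radius, rng):
    """Return a random (x, y, z) on a sphere centered in the RGB color cube."""
    # Normalizing a 3D Gaussian sample gives a uniformly distributed direction.
    while True:
        v = [rng.gauss(0.0, 1.0) for _ in range(3)]
        length = math.sqrt(sum(c * c for c in v))
        if length > 1e-9:
            break
    return tuple(CENTER + radius * c / length for c in v)


rng = random.Random(2008)
points = [random_sphere_point(100.0, rng) for _ in range(5)]
for x, y, z in points:
    # X -> violin, Y -> viola, Z -> cello (the value-to-pitch mapping is omitted here).
    print(round(x), round(y), round(z))
```

Because the radius is smaller than 127.5, every coordinate stays inside the 0 to 255 range of a color value.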

Play Video: XYZ Music: String Trio Sphere Random Points / 05:12 / 2008

Sheet Music: Violin (X, Red) PDF, Viola (Y,Green) PDF, Cello (Z, Blue) PDF

"XYZ Music: Spiral Cylinder" visualizes the association between sound gradients, 2D plane colors, and spiral 3D cylinders drawn within RGB color cubes.

Play Video: XYZ Music: Spiral Cylinder / 02:36 / 2008

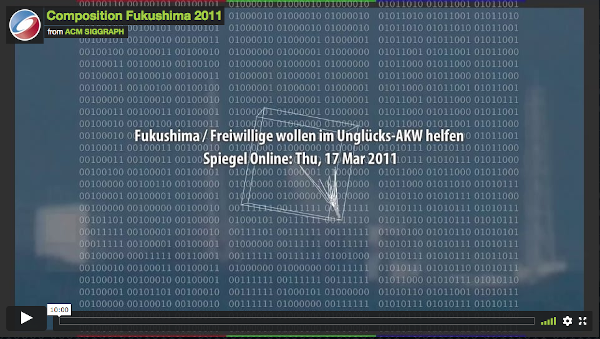

"Composition Fukushima 2011" is a project that takes news photos and headlines about the Fukushima nuclear accident, published on the Internet in March 2011, into an application developed for this purpose, converts the photo data into music, and displays the results in chronological order. The work was created on the day each news photo was posted on the news site. The original video is about an hour long, but it was edited down to 10 minutes for the 2015 online exhibition of ACM SIGGRAPH (the Association for Computing Machinery's Special Interest Group on Computer Graphics and Interactive Techniques). This 10-minute version is archived in the Trauma Film Collection of ArtVideo Cologne, Germany.

Play Video: Composition FUKUSHIMA 2011 (on ACM SIGGRAPH site) / Digest 10:00

• Other RGB Music studies

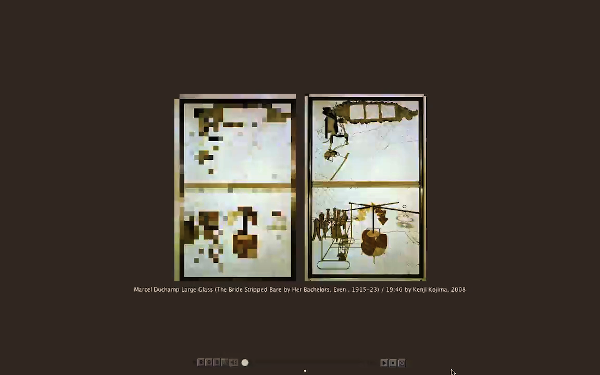

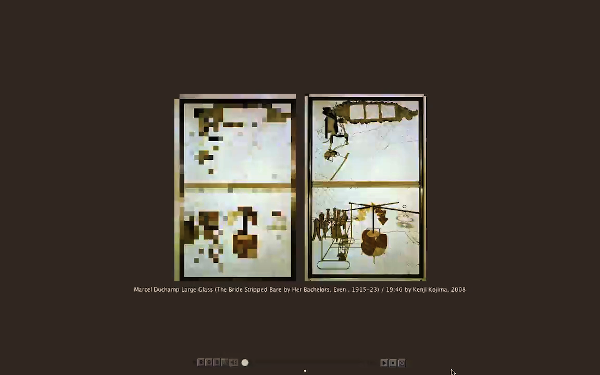

You may be interested in what kind of RGB music the colors of Marcel Duchamp's "The Large Glass" contain. The 2008 work runs about 20 minutes.

Play Video: Marcel Duchamp's The Bride Stripped Bare by Her Bachelors, Even (The Large Glass) / 20:09 / 2008

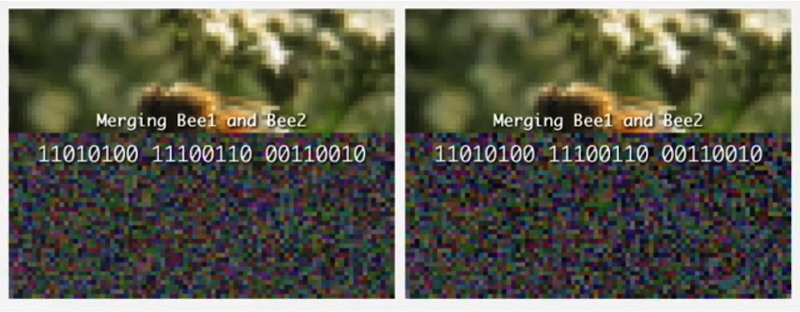

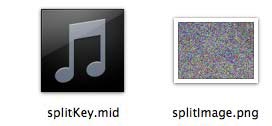

The example video below shows the process of decrypting an image from an encrypted mosaic image and a piece of music created as the decryption key. Both the encrypted mosaic and the key music are on the author Kojima's website, and the decryption application MergeAudioVisual (which works on macOS 10.15 or lower) decrypts the original image while playing the key music, so you can watch the progress.

The video begins by extracting notes from the MIDI audio file. The extracted notes (symbols and numbers) are converted to binary. Each binary value is combined by a logical operation (exclusive OR) with the value at the same position in the visual data, which restores the value it had before encryption. That original value is a pixel value of the original photo.

Play Video: Audio and Visual files merged into an image. Bee 1380418994 / 11:28 / 2013

Encryption: The developed application "SplitAudioVisual" uses an encryption method called the one-time pad, which performs an XOR (exclusive OR) operation on each bit of the photo data and outputs data of the same length. The algorithm converts one of the two encrypted files created from the photo into a visual (a color mosaic) and the other, the key data, into music. (Here one file is called the cipher and the other the key, but the names are only for convenience; neither is a key in the usual sense.)
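The one-time pad split, and the XOR merge that reverses it, can be sketched as below. The function names `split` and `merge` are hypothetical and only echo the application names; the conversion of the two outputs into a color mosaic and a MIDI file is omitted.

```python
import secrets


def split(data: bytes):
    """One-time pad: return (key, cipher), each the same length as `data`."""
    key = secrets.token_bytes(len(data))              # random bytes, used once
    cipher = bytes(d ^ k for d, k in zip(data, key))  # XOR position by position
    return key, cipher


def merge(key: bytes, cipher: bytes) -> bytes:
    """XOR the two files position by position to restore the original data."""
    return bytes(k ^ c for k, c in zip(key, cipher))


# Twelve binaries standing in for the pixel data of a tiny photo.
photo = bytes([255, 255, 0, 0, 255, 0, 255, 0, 255, 0, 0, 255])
key, cipher = split(photo)
restored = merge(key, cipher)
print(restored == photo)
```

Since XOR is symmetric, swapping the two files gives the same result, which fits the remark that neither file is a key in the usual sense.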

Decryption: The project provides a player that plays the music while showing the visual progress of reverting to the original photo (see the video above). Creating the cipher is the artist's own domain (production), and the state shown by the player (decryption) is the artwork. The player downloads the encrypted files from Kojima's server, so you can watch the image return to its original form while listening to the music. In other words, the artwork can be seen anywhere on the planet through the internet.

Free download: macOS version decryption app MergeAudioVisual_Mac06.zip (20140723)

It does not work on macOS Big Sur. It runs on macOS 10.15 or lower.

Play Video: German Unity Day / 02:00 / 2016 "Brave New World 2016 – Beyond the wall".

The above example shows how two different files merge into one German flag with the decryption of "Split / Merge Audio Visual". This work was exhibited at the 2016 "German Unity Day" exhibition "Beyond The Wall" in Berlin.

Play Video: Bee and Bird / 08:17 / 2014

The cipher file and the key file do not necessarily have to be a mosaic and an audio file. The video above uses two audio (MIDI) files to restore the original bee image.

The "Split/Merge AudioVisual" project above used the "one-time pad" to create Cipher Art, but I also created another project, "CipherTune", which uses the "Blowfish" algorithm. "CipherTune" was inspired by Alfred Hitchcock's movie "The Lady Vanishes". The story, set on the eve of World War II, is about delivering a tune in which an old lady has hidden a secret message from the mountains of the Alps to the intelligence department in London. Binary operations are used for the encryption, decryption, and music conversion, but here I will only introduce the video made in 2013. "CipherTune" was selected for the science digital festival "ESPACIO ENTER 2013", held in Tenerife, Canary Islands, Spain.

Play Video: CipherTune Concert / 06:10 / 2013

• Other Cipher Art studies

In the early days of project development, I was thinking of an installation that would convert video camera footage into live music from subtle changes in light.

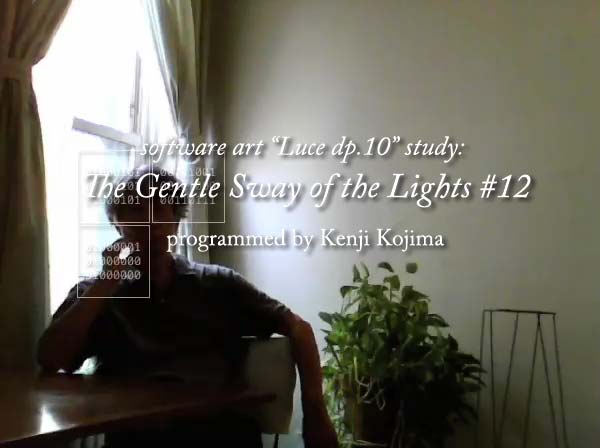

Left Video: Gentle Sway Study #12: Luce 10 Grand Piano / 05:44 / 2014

Right: Make a video cam an instrument Luce Dp.10

The locations where data was collected are connected by lines to make a two-dimensional drawing, and each point is given its acquisition time as the Z-axis. Finally, the three-dimensional wireframe is rotated. I still do not know the meaning of this drawing. I had a desire to draw something in 4D space-time, so I am trying it in the space of the video.
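The construction of the wireframe can be sketched as follows. The sample data, the names, and the choice of rotating around the Y axis are assumptions made for illustration; "Luce" itself is written in LiveCode and may build and rotate the wireframe differently.

```python
import math


def rotate_y(point, angle):
    """Rotate an (x, y, z) point around the Y axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x + s * z, y, -s * x + c * z)


# Hypothetical samples: (x, y) is where data was read in the frame,
# t is the acquisition time in seconds.
samples = [(10, 20, 0.0), (40, 25, 0.5), (35, 60, 1.0), (80, 50, 1.5)]

# Use the acquisition time as the Z coordinate.
vertices = [(x, y, t) for x, y, t in samples]

# Connect consecutive points with lines to form the wireframe.
edges = [(i, i + 1) for i in range(len(vertices) - 1)]

# Rotate the whole wireframe by 30 degrees for one frame of the animation.
rotated = [rotate_y(v, math.radians(30)) for v in vertices]
for index_a, index_b in edges:
    print(rotated[index_a], "->", rotated[index_b])
```

Repeating the rotation with a slowly increasing angle gives the turning wireframe seen in the videos.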

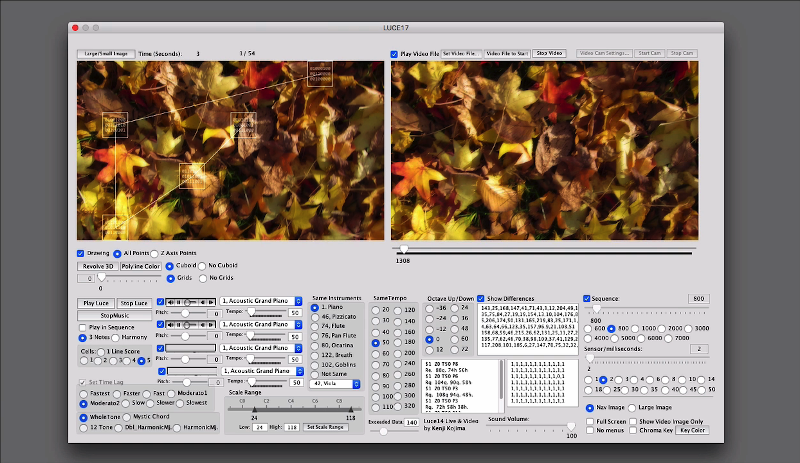

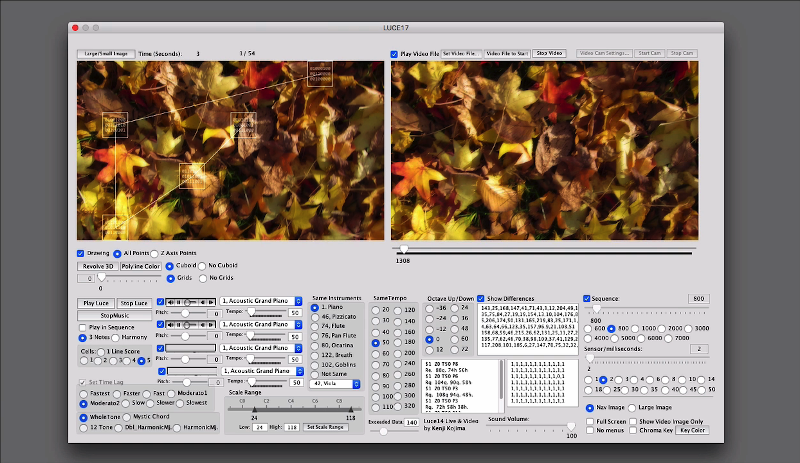

Play Video: Luce 17 Autumn Leaves Test / 01:00 / 2018

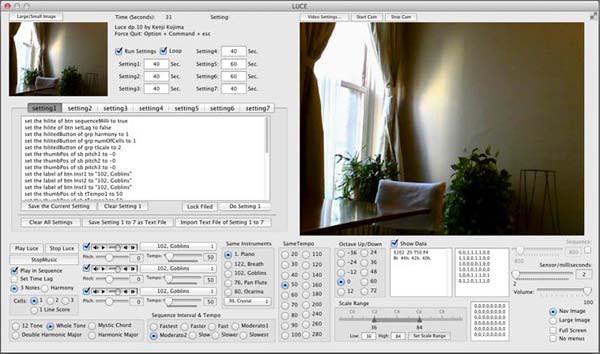

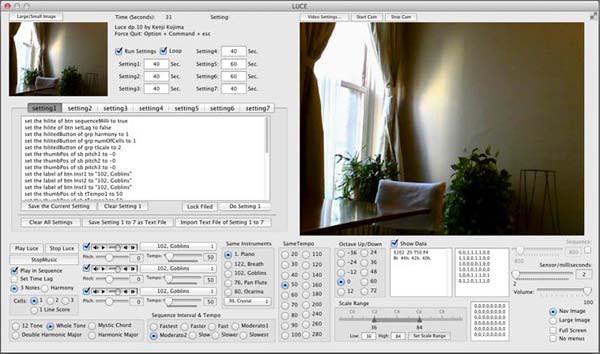

The artwork creation application "Luce" used for this video doubles as a window for testing parameters and for navigation. From this window (the navigator), the final work is run on a second display to capture a full-screen video.

Although the final form of the work is a video, it does not present what is usually called video art, such as story development or visual illusion. The main reason for making a video is that the program I wrote will stop working after a few years, so I wanted to keep a record of the program while it still runs. Also, since that time, the quality of video itself has improved. Therefore, although the work is a video, think of it as a capture of the algorithm in progress.

Preliminary research started in 2011, went through many trials, and gradually changed its functions to arrive at the current interface. You can see the progress in 1 to 5 below, which show a large number of prototypes.

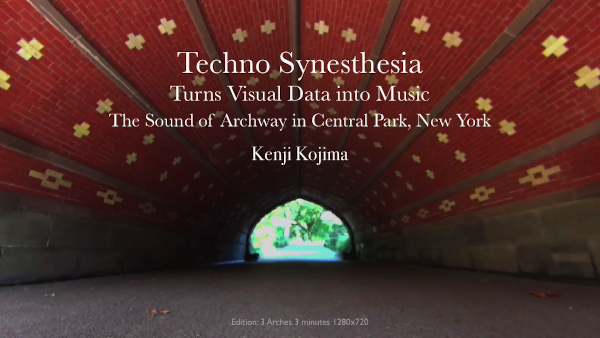

It would take too long to explain the whole process, so I have chosen to introduce a digest video of 3 works from "The Sound of Archway", produced in 2016, and 3 works from "Four Seasons / Ecosystem goes around on Spaceship Earth", produced in 2018. The 2016 series retained an afterimage at the moment the data was taken, but did not create a 3D wireframe from the timeline.

"The Sound of Archway" series participated in media art festivals in Greece, Romania, and Spain from 2016 to 2018. "Techno Synesthesia: Playmates Arch" is publicly archived at SUMULTAN MEDIA, Timișoara, Romania.

Play Video: Techno Synesthesia: The Sound of 3 Archways Digest / 03:00 / 2016

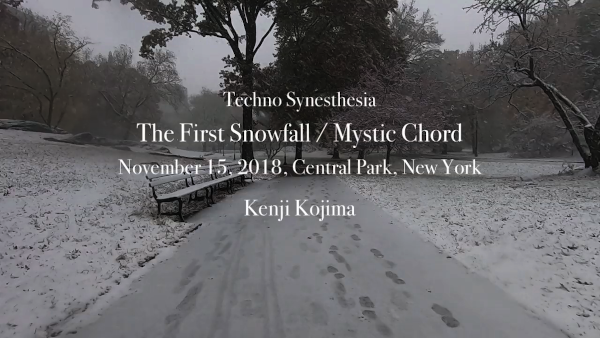

Individual works from the "Four Seasons" series participated in media art festivals in the United States, France, Spain, Indonesia, China, and Iran from 2018 to 2020, and all nine works in the series participated in the Web Biennial of the Istanbul Contemporary Art Museum in Turkey. In addition, three works were scheduled to participate in FILE 2020, planned for June to August 2020 in Sao Paulo (Brazil), but the festival was postponed indefinitely due to Covid-19 (as of Dec. 2020).

Play Video: Withering Tulips Mystic Chord / 02:30 / 2018

On-line Catalog: The Rencontres Traverse Vidéo XIII p58-59 (French)

Play Video: The Spring Walk on Spaceship Earth / 02:30 / 2018

11th Annual International Sustainability Short Film Competition - The University of North Carolina

Play Video: The First Snowfall, November 15, 2018 / 03:20 / 2018

Web Biennial APEIRON 2020, Istanbul Contemporary Art Museum, Istanbul, Turkey

Online Exhibition Website: 9 Works

Online Exhibition 2019 / Four Seasons / Ecosystem goes around on Spaceship Earth

The last work was created in response to a call from Artvideo KOELN, Germany, on the theme of the 2020 corona pandemic: Corona! Shut down? / Corona Film Collection 2020 / Number 46, Kenji Kojima.

Play Video: Techno Synesthesia: Corona! Shutdown? Open Mailbox / 03:15 / 2020

The "techno" of "Techno Synesthesia" is computer technology. The technology has two components. One is the composition method, "algorithmic composition"; the other is "Sensor Device and Data", which detects and organizes the target data from the large amount of data acquired by a computer. "Sensor Device and Data" are technologies for manipulating binaries (which store and process information as 0s and 1s). In human terms, they correspond to the sensory organs, the entrance for information, and to the organs that process it (which could be called nerve cells). They also include the computer devices that reproduce visual and auditory data.

"Algorithmic composition" is a method of composing from data selected for the purpose by always following the same procedure (an algorithm). It is a long-established composition method, not limited to computer composition.

"Synesthesia" is a perceptual phenomenon in which a stimulus to one sense is perceived through another. There are people in the world who, when they see a certain color, hear a certain sound. I can vaguely understand it in thought, but unfortunately it is a sensation that ordinary people like me do not have. So, instead of personal intuition, let us use contemporary computer technology to express it as an extension of artistic thinking. That is "Techno Synesthesia". Since "synesthesia" is not fully explained scientifically, I thought it better to treat the project as a "play of Homo ludens" using computer technology rather than as a combination of science and art.

"Cyborg" refers to the idea and technology of using science and technology to expand the human body and cognitive abilities, developing them as if through biological evolution. Behind this idea is the rapid development of science and technology since the late 20th century. Cyborgs that expand human physical abilities have become a reality, not science fiction. Humankind, whose ancestors rose from marine life onto land and underwent gradual physical changes for survival over billions of years, is now undergoing a new evolution that is advancing rapidly in the 21st century.

However, the art project "Techno Synesthesia" does not aim to extend the body like a cyborg or to extend sight and hearing like telecommunications. It is not an expansion of current human senses and abilities; it explores the crossover of the sensory organs through art that uses technology. Humans have five senses. In the process of evolution, over many generations, we very slowly developed the five senses as functions for grasping the environment in order to survive. Humans use their eyes to grasp the shape and color of the outside world. How do creatures in the deep sea that have no eyes grasp the outside world? Perhaps the skin plays that role. Bats grasp three-dimensional space with sound waves. We trust the information delivered by the five senses, so we may be ignoring the abilities of other sensory organs. Or science and technology may come to supplement the senses, as they already extend physical ability.

The Russian composer Alexander Scriabin wrote, in the score of his symphony "Prometheus", a part called "Luce" (Italian for "light") in addition to the instruments of the orchestra. It specified colors of light determined by a fixed rule from the musical notes in his system (the score part of the symphony "Prometheus"). In Scriabin's time it was difficult to perform the Luce part in an actual performance. However, recent technological developments have made it possible to perform the whole score, including "Luce" (Symphony "Prometheus" Video Document & Performance Yale Symphony 2010).

Wassily Kandinsky, often credited with creating the first abstract paintings, was impressed by Scriabin's work and was interested in synesthesia, which he tried to incorporate into his own work. Kandinsky, of the art publication "The Blue Rider", said that in his paintings yellow is the sound of middle C on a trumpet, black is an ending, and so on, connecting a specific sound to a specific color. In other words, instead of intuitively choosing a color each time, the production is based on a fixed rule.

I hope that many attempts will be made to combine the artistic senses in today's computer-based data processing. We are now at a turning point of the times, as when figures that had floated above the ground in medieval painting came to stand on the ground in the Early Renaissance.


January 2021, Kenji Kojima http://kenjikojima.com/ Email: index@kenjikojima.com




In summary, the three projects are:

- RGB Music (2007 - ) creates music from the pixel colors of a still image. The data are converted into music at the binary level and composed automatically.

- Cipher Art (2013 - 2016) uses RGB Music as the basic technology for music creation. Binary logic operations create an encrypted image and a music key from a photograph, and the decryption process is visualized.

- Techno Synesthesia (2014 - ) creates "RGB Music" along the time axis of a video, then builds a 3D wireframe by adding the acquisition time (Z) to the 2D location (X, Y) where each piece of data was collected.

The project is an art that incorporates the ideas and methods of computer science. It is not intended for science. Binary operations require a logical stack. The logical and elegant construction has a certain inner beauty. But the accumulation of beauty does not prove anything or seek scientific results. The rips of meaninglessness in purposeless beauty may be the reason for art. The project uses the programming language "LiveCode".

Even before I started computer art, I was always interested in the materials that are the most basic construction of the painting. The idea that the smallest unit of data "binary" was used as an art material was influenced by the technique of medieval painters who mixed colored powder with mediume to make paints.

Because I feel a common passion with prehistoric cave artists who rubbed color earth powders onto a calcareous cave. Nowadays, "colors", which are a mixture of pigments and medium and packed in tubes, can be easily obtained at painting material stores without being aware of the raw materials and the process of making them. These colors determine the final form of the painting usually. If it is according to the purpose such as oil painting, watercolor painting, house paint, spray paint, etc., and meets the purpose, it is easy and convenient.

Similarly, most digital art visually adds variations of combinations that follow the superficial forms of past visual art, such as paintings, photographs, and movies, with prepared programming or short cut and, in some cases interactiveness, and completes the artwork. Interactiveness that appears to be new is also limited to information that does not confuse (or does confuse) the art connoisseurs. But in the future, digital art has the potential to cross over sensations, or create entirely new art forms that have never been seen before.

All computer data is made up of binaries. It is an important concept to be able to convert the same data into other forms. If the data collected for a certain purpose is converted to another format and output, it will appear to be a file in that output format. It is a decisive difference from the art that pursues illusion.

"RGB Music", which we will discuss at the beginning, converts the smallest unit pixel of an image into midi music format and outputs it. One-color pixel is made up of four binaries. To format the pixels into music, reduce them to binary, and convert them to midi files. Aside from the aesthetic sense of general music, the converted music is formally created of 12 scales of music. Files created for other purposes, in the same way, can be reduced to binary to create a completely different format file. Whether the file is useful or useless is a matter of value in a different dimension. We discuss two projects in the latter that use the technology of "RGB Music" and takes other approaches.

I'm working on converting images to music because of my interest in exploring the common elements of sight and hearing, but it could be possible to convert data from different sensations. Of course, even with images and music, you will find so many algorithms that differ from my method. However, assembling art from binaries also means that the artist self creates a development environment that realizes the idea of art. Digital art is still in its infancy and is now testing basic possibilities that are not bound by past methods. I have no idea in which direction we are heading. What I can say now that the idea from binary is not bound by past concepts, and I strongly feel the possibility of creating new art.

Another reason I stick to digital art is that I think it's an art method that is a little cleaner for the earth and allows me to think more toward the future than I use with other art materials. It is unbearable for artists that the artwork will become garbage that pollutes the earth 100 years later. Who can deny that possibility? We have to think about the quality of desire. The 21st-century artist requires ethics that are responsible for the future of this planet. That is stopping the expression of art using materials does not deny the artwork of the past. Art as a digital creative commons changes the quality of desire.

Additional statement 1:

Just after I wrote this, in March 2021, a digital artwork was sold at auction for about $70 million. It is digital art that incorporates an NFT (Non-Fungible Token), a method that can prove uniqueness on the blockchain. I would like to write a little about this. First of all, the question is: "Must art be one of a kind?" Uniqueness would be meaningful from the standpoint of art collections and the art market. I cannot go through the history of collecting here, so I will write only a brief conclusion. I think that the meaning of art is only one. My conclusion is that NFT digital art is a new alternative form of monetary value, which through the history of gold coins and banknotes has come to be recorded only in ledgers, and that it has nothing to do with the meaning of digital art. It is one of the movements of the money game. In addition, its large power consumption is destructive to the environment. And in the near future, quantum computers will break the blockchain.

Additional statement 2: May 2021

I found a very interesting Japanese podcast interview about the book "Human History at the End of the Media: 'Philosophy and Mankind'" by Yuichiro Okamoto. The series has 11 episodes in total. Interviewer: Megumi Wakabayashi. Vol. 10 is particularly closely related to what I wrote: "Since all computer data is composed of binaries, the important concept is that the same data can be converted to other forms."

RGB Music converts pixel data of an image to music

"RGB Music" is made by the computer application "RGB MusicLab" that I developed in 2007. The application "RGB MusicLab" reads the three color values (Red, Green, Blue) 0 to 255 of a pixel from the upper left to the lower right of the image and automatically converts them into 12-scale notes. In the basic form, you can change the scale through the adjustment filter. With the pixel value, 120 as the center C, it is automatically distributed to the upper and lower values. 0,0,0 is silent, and the length of the sound is determined by the numerical value. It is up to the creator to choose whether to make higher numbers are long notes or vice versa.Since the image of a photograph uses innumerable pixels, first consider the image as a mosaic and each grid as its average color. In fine mosaics which many numbers of colors are converted to long music, and in rough mosaics which smaller numbers of colors are converted to short music. The converted notes are played on midi instruments. It is the outline of the "RGB Music".

You can download the application "RGB MusicLab" from here.

It does not work on macOS 11 Big Sur. Please use an older version of macOS for the Mac version.

The video below shows the basic operation of the application "RGB MusicLab" and the file creation for the actual media art installation "Subway Synesthesia". "Subway Synesthesia" is the exhibition title given by the gallery director when the work was exhibited at the non-profit art gallery "AC Institute" in New York City in 2008. The exhibition used a desktop application created for the exhibition, not a projection of a video file.

Play Video: Application "RGB MusicLab" & media art installation "Subway Synesthesia" / 06:36 / 2008

I tabulated the basic relationship between pixels and binaries in a usual computer image. At the top of the table: From the left, here is an image of only three pixels, "Red", "Green", and "Blue". The 2nd row of the table: One pixel consists of four binaries. "Alpha" at the beginning of the pixel is the transparency of the image, and the numbers are lined up as "Red", "Green", and "Blue". The three side-by-side pixel images contain 3 x 4 = 12 binaries. The 3rd row of the table: Numbered in the order in which the binaries are lined up. The 4th row of the table: Binaries are shown in decimal numbers from 0 to 255. The 5th row of the table: The above contents are written in binary. If the other color values of the four binaries in a pixel are zero, the largest number (255 in decimal) is the optical pure color of "Red", "Green", and "Blue". The "Alpha" 255 is opaque.

| Pixel | Red Pixel | Red Pixel | Red Pixel | Red Pixel | Green Pixel | Green Pixel | Green Pixel | Green Pixel | Blue Pixel | Blue Pixel | Blue Pixel | Blue Pixel |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Element | Alpha | Red | Green | Blue | Alpha | Red | Green | Blue | Alpha | Red | Green | Blue |
| Number | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
| Decimal | 255 | 255 | 0 | 0 | 255 | 0 | 255 | 0 | 255 | 0 | 0 | 255 |
| Binary | 11111111 | 11111111 | 00000000 | 00000000 | 11111111 | 00000000 | 11111111 | 00000000 | 11111111 | 00000000 | 00000000 | 11111111 |
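The table can be reproduced with a few lines of Python. This is only a sketch of the data layout, assuming each pixel is stored as four 8-bit values in the order Alpha, Red, Green, Blue as described above; the function names are hypothetical.

```python
# Three pixels in (alpha, red, green, blue) order, as in the table.
PIXELS = [
    (255, 255, 0, 0),  # red pixel
    (255, 0, 255, 0),  # green pixel
    (255, 0, 0, 255),  # blue pixel
]


def flatten_argb(pixels):
    """Line up all pixel elements in storage order (the "Decimal" row)."""
    return [value for pixel in pixels for value in pixel]


def to_binary_strings(values):
    """Write each 0-255 value as eight binary digits (the "Binary" row)."""
    return [format(value, "08b") for value in values]


if __name__ == "__main__":
    decimal_row = flatten_argb(PIXELS)
    binary_row = to_binary_strings(decimal_row)
    for number, (dec, bits) in enumerate(zip(decimal_row, binary_row), start=1):
        print(number, dec, bits)
```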

Let's proceed with various prototypes made with the application "RGB MusicLab".

In 2007 I made music from a Kandinsky painting; it was the first RGB Music file. I chose it because Kandinsky thought about the relationship between abstract painting and music. At that time I ran the file directly in a desktop application, so I did not record it as a video. In 2021 I searched for this file and made it into a video, using version 41 of "RGB MusicLab", the last version developed. It may have been the last chance to keep a moving record.

Play Video: RGB Music: Wassily Kandinsky Composition VII / 04:20 / 2007

I created a piano piece from the RGB values of an image of Leonardo da Vinci's Mona Lisa. The piano sound the program plays, the target icon that moves across the mosaic on the image, and the background color are synchronized. The 3D wireframe in the center is an RGB color cube whose XYZ axes are assigned values from 0 to 255; it shows where the note currently being played lies in the space of the color cube. The dots are connected by a line as the music progresses. In the file created by the application "RGB MusicLab" the viewpoint of the cube can be changed with the mouse, but that function is not available here because this is a video file.

Play Video: Leonardo da Vinci’s Mona Lisa Smile Variations Harmonic Minor / 04:02 / 2007

Received Pixelstorm Award "RGB into Music" Honorary Mention, Basel, Switzerland, 2009

The website has 8 variation studies made from the Mona Lisa image.

MonaLisa Smile Variations: Scale Studies.

The second Mona Lisa from the top moves the colors of the chromatic scale to the corresponding notes to make a "Harmonic Minor Scale". The ear can clearly tell the difference in the sound, but normal human vision cannot tell the difference in the image. The Mona Lisa below the others is muted by setting the colors that do not correspond to the scale to "0". Because the QuickTime 7 MIDI instrument that was used in the browser when the original was created is no longer available, the web page now plays a JavaScript MIDI instrument. The sound quality is much lower.

Next is a simple color pattern. I assigned a different instrument to each of the R, G, and B values. I titled it "Brown Diamonds in North Africa" because of the continuous pattern of brown diamonds and the feeling of the music. It is a color pattern, and it also functions as a score.

Play Video: RGBMusic / Brown Diamond in North Africa / 3:11 / 2007

Extending the idea of the RGB color cube, you can replace RGB values with XYZ values to create music from the coordinates of a three-dimensional surface. With the center of the sphere placed at the center of the RGB color cube, all points are at an equal distance from the center. This forms a unique harmony, like classical music (I am not pursuing music theory). The string trio instruments violin, viola, and cello are assigned to X, Y, and Z. The viewpoint of the RGB color cube changes and rotates according to the speed of the melody. The background color corresponds to the three notes being played.
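The geometry can be sketched in Python as follows. This is an illustration only, not the method used for the piece: it assumes the sphere is centered at (127.5, 127.5, 127.5) with a radius of 127.5 so that it just fits inside the 0 to 255 cube, and it picks random surface points by normalizing a Gaussian vector. The names `CENTER`, `RADIUS`, and `random_sphere_point` are hypothetical.

```python
import math
import random

CENTER = 127.5  # assumed center of the 0-255 RGB color cube on each axis
RADIUS = 127.5  # assumed radius: the largest sphere that fits in the cube


def random_sphere_point(rng):
    """Return a random (x, y, z) point on the surface of the sphere."""
    while True:
        # A normalized Gaussian vector is uniformly distributed in direction.
        vector = [rng.gauss(0.0, 1.0) for _ in range(3)]
        norm = math.sqrt(sum(c * c for c in vector))
        if norm > 0.0:
            return tuple(CENTER + RADIUS * c / norm for c in vector)


if __name__ == "__main__":
    rng = random.Random(2008)
    for _ in range(3):
        x, y, z = random_sphere_point(rng)
        # X, Y, and Z would drive violin, viola, and cello respectively.
        print(round(x), round(y), round(z))
```

Every generated point lies at the same distance from the center of the cube, which is the property the paragraph above relies on.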

Play Video: XYZ Music: String Trio Sphere Random Points / 05:12 / 2008

Sheet Music: Violin (X, Red) PDF, Viola (Y,Green) PDF, Cello (Z, Blue) PDF

"XYZ Music: Spiral Cylinder" visualizes the association between sound gradients, 2D plane colors, and spiral 3D cylinders drawn within RGB color cubes.

Play Video: XYZ Music: Spiral Cylinder / 02:36 / 2008

"Composition Fukushima 2011" is a project to incorporate news photos and their headlines related to the Fukushima nuclear accident on the Internet in March 2011 into an application developed for this purpose, convert the photo data into music, and display it in chronological order. The work was created on the day the news photo was posted on the news site. The original video is about an hour-long, but it was edited in the 10-minute for the ACM SIGGRAPH (CG Subcommittee of the American Computer Society) 2015 Online Exhibition. This 10-minute version of the work is archived in the Trauma Film Collection of ArtVideo Cologne, Germany.

Play Video: Composition FUKUSHIMA 2011 (on ACM SIGGRAPH site) / Digest 10:00

• Other RGB Music studies

You may be interested in what kind of RGB music the colors of Marcel Duchamp's contemporary painting "Large Glass" contain. The 2008 work runs about 20 minutes.

Play Video: Marcel Duchamp's The Bride Stripped Bare by Her Bachelors, Even The Large Glass / 20:09 / 2008

Cipher Art creates a cryptographic mosaic and a music key

This project calculates the true or false of each digit of a binary number. For people who picture binary as 0s and 1s, I think this project looks the most like an art material. Human senses divide the information about the outside world according to the functions of the sensory organs and grasp it as the real world. The two typical kinds of information are sight and hearing; the others are generally classified as skin sensation, smell, and taste. I think there is something in common between how we perceive the outside world and how we break a code.

The example video below shows the process of decrypting an image from a mosaic of the encrypted image and music created as the key for decrypting it. Both the encrypted mosaic and the music of the encryption key are on the author Kojima's website, and the decryption application MergeAudioVisual (works on macOS version 10.15 or lower) restores the original image while playing the music of the encryption key, so you can watch the progress.

The video begins by extracting notes from the MIDI audio file. The extracted notes (symbols and numbers) are converted to binary. Each of these binary numbers is combined by a logical operation (exclusive OR) with the binary number at the same position in the visual data, which restores the number from before encryption. That original number is the pixel value of the original photo.

Play Video: Audio and Visual files merged into an image. Bee 1380418994 / 11:28 / 2013

Encryption: The algorithm of the application "SplitAudioVisual", developed for this project, is the encryption method called a one-time pad, which performs an XOR operation (exclusive OR) on each bit of the photo data and outputs data of the same length. Of the two files created from the photo, the algorithm converts one into a visual (color mosaic) and the other, the cryptographic key data, into music. (Here one is called the cipher and the other the key, but this is not a key in the usual sense; the names are used for convenience.)

Decryption: The project provides a player that plays the music while showing the visual progress of reverting to the original photo (see video above). The creation of the cipher is the artist's own area (production), and the state shown by the player (decryption) is the artwork. The player downloads the encrypted files from Kojima's server. You can watch the process of returning to the original image while listening to the music. In other words, you can see the artwork anywhere on the planet through the internet.
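The split and merge steps described above can be sketched in a few lines of Python. This is a minimal illustration of a one-time pad with XOR, not the code of "SplitAudioVisual" or "MergeAudioVisual": the function names `split` and `merge` are hypothetical, the random data comes from Python's `secrets` module, and the conversion of the two outputs into a mosaic and a MIDI file is omitted.

```python
import secrets


def split(data: bytes) -> tuple[bytes, bytes]:
    """Split data into a cipher and a random key of the same length."""
    key = secrets.token_bytes(len(data))  # one random byte per data byte
    cipher = bytes(d ^ k for d, k in zip(data, key))  # bitwise XOR
    return cipher, key


def merge(cipher: bytes, key: bytes) -> bytes:
    """XOR the cipher with the key to restore the original data."""
    if len(cipher) != len(key):
        raise ValueError("cipher and key must have the same length")
    return bytes(c ^ k for c, k in zip(cipher, key))


if __name__ == "__main__":
    original = bytes([255, 255, 0, 0, 255, 0, 255, 0, 255, 0, 0, 255])
    cipher, key = split(original)
    print(merge(cipher, key) == original)
```

Because XOR is its own inverse, applying the same key a second time restores every byte, and neither output alone reveals the photo.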

Free download: macOS version decryption app MergeAudioVisual_Mac06.zip (20140723)

It doesn't work on macOS Big Sur. It runs on V.10.15 or lower OS.

A little more detail:

Split: The software splits a digital image into audio and visual files by a bitwise XOR operation (logical exclusive OR). The bitwise operation takes two bit patterns of equal length and performs exclusive OR on each pair of corresponding bits. The software generates random numbers, as many as there are pixels in the original image. Each binary of the random numbers is applied to the corresponding binary of the image by bitwise XOR. Bitwise XOR turns 0 and 0 into 0, 0 and 1 into 1, and 1 and 1 into 0. The position of each digit does not change. If the binary of one pixel element is 10010011 and the random number is 00100001, the result will be 10110010. See the 8-bit binary example, which has the same length as one color data element.

10010011 (original image)
00100001 (random number)
10110010 (the result)

Audio and Visual: The software converts the random number data to musical notes with Kenji Kojima's RGB Music technology. RGB value 120 is converted to middle C. Every two steps in value raise or lower the pitch by a semitone. One pixel makes a harmony of three notes. 0,0,0 makes no sound. The note length is determined by the color value. The music is twelve-tone music. The sample MIDI file was created from the musical notes of the random numbers. You can listen to it. The resulting image is a new image processed by bitwise XOR. Human perception cannot recognize what the image was. We now have 2 digital files, one audio and one visual. You can think of the audio as a key and the visual as a cipher image.

Merge: The software converts the audio key (music file) to musical notes and then to binary. That binary is applied to the binary of the cipher image (visual) by bitwise XOR. See the example.

10110010 (split image)
00100001 (split audio key)
10010011 (same binary as the original)

Logical operation note (Outline of logical operation of LiveCode programming)

Play Video: German Unity Day / 02:00 / 2016 "Brave New World 2016 – Beyond the wall".

The above example shows how two different files merge into one German flag with the decryption of "Split / Merge Audio Visual". This work was exhibited at the 2016 "German Unity Day" exhibition "Beyond The Wall" in Berlin.

Play Video: Bee and Bird / 08:17 / 2014

The cipher and key files do not necessarily have to be a mosaic and an audio file. The video above uses two audio (MIDI) files to make the original bee image.

"Split/Merge AudioVisual" above used a one-time pad to create Cipher Art, but I also created another project, "CipherTune", that used the "Blowfish" algorithm. "CipherTune" was inspired by Alfred Hitchcock's movie "The Lady Vanishes". The story is about delivering a tune, into which an old lady has put a secret message, from the mountains of the Alps to the intelligence department in London on the eve of World War II. Binary operations are performed in the encryption, decryption, and music conversion, but here I will only introduce the video made in 2013. "CipherTune" was selected for the science digital festival "ESPACIO ENTER 2013" held in Tenerife, in Spain's Canary Islands.

Play Video: CipherTune Concert / 06:10 / 2013

• Other Cipher Art studies

Techno Synesthesia extracts music from video images

Video expresses motion with a series of still-frame images, so a huge amount of data is used even for a short video. Reading every pixel of a frame from upper left to lower right, as RGB Music does, would turn a single short video into music lasting months rather than hundreds of hours. To extract an appropriate amount of data along the time axis, the algorithm divides the frame into 48 (later 84) grid cells and, at regular intervals, acquires the color data of the 5 cells with the largest difference in brightness.

In the early days of project development, I was thinking of an installation that would convert video cam footage into live music with subtle changes in light.
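The grid sampling step can be sketched as follows. This is not the code of "Luce". The text does not say how the "difference in brightness" is measured, so this sketch assumes it is the change in a cell's average brightness between two sampled frames. It also assumes the 48 cells are arranged as 8 columns by 6 rows, that brightness is the plain mean of R, G, and B, and that a frame is a list of rows of (R, G, B) tuples. All function names are hypothetical.

```python
def cell_averages(frame, cols, rows):
    """Return the average (R, G, B) color of each grid cell, row by row."""
    height, width = len(frame), len(frame[0])
    averages = []
    for cy in range(rows):
        y0, y1 = cy * height // rows, (cy + 1) * height // rows
        for cx in range(cols):
            x0, x1 = cx * width // cols, (cx + 1) * width // cols
            pixels = [frame[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            count = len(pixels)
            averages.append(tuple(sum(p[i] for p in pixels) / count for i in range(3)))
    return averages


def brightness(color):
    """Brightness as the plain mean of R, G, and B (an assumption)."""
    return sum(color) / 3


def top_changes(prev_frame, frame, cols=8, rows=6, count=5):
    """Return (cell index, average color) for the cells that changed most."""
    previous = cell_averages(prev_frame, cols, rows)
    current = cell_averages(frame, cols, rows)
    diffs = [abs(brightness(c) - brightness(p)) for p, c in zip(previous, current)]
    ranked = sorted(range(len(current)), key=lambda i: diffs[i], reverse=True)
    return [(i, current[i]) for i in ranked[:count]]


if __name__ == "__main__":
    dark = [[(0, 0, 0)] * 8 for _ in range(6)]
    lit = [[(0, 0, 0)] * 8 for _ in range(6)]
    lit[0][5] = (200, 200, 200)  # one cell becomes bright
    print(top_changes(dark, lit)[0])
```

The selected colors could then be turned into notes in the same way as RGB Music pixels.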

Left Video: Gentle Sway Study #12: Luce 10 Grand Piano / 05:44 / 2014

Right: Make a video cam an instrument Luce Dp.10

The places where the data was collected are connected by lines to make a two-dimensional drawing, and points are added with each acquisition time as the Z-axis. Finally, the three-dimensional wireframe is rotated. I still do not know the meaning of this drawing. I had a desire to draw something in 4D space-time, so I am trying it in the space of the video.

Play Video: Luce 17 Autumn Leaves Test / 01:00 / 2018

The artwork creation application "Luce" used for this video doubles as a window for testing parameters and for navigation. From this window (the navigator), the final work is run on a second display to capture a full-screen video.

Although the final form of the work is a video, it is not so-called video art with story development or visual illusion. The biggest reason for making a video is that the program I made will stop working after a few years, so the motivation was to keep a record while the program still ran. Also, since that time, the quality of video itself has improved. Therefore, although the work is a video, think of it as a capture of the progress of the algorithm.

Preliminary research started in 2011, went through many trials, and gradually changed its functions until it reached the current interface. You can see the progress in items 1 to 5 below, which show a large number of prototypes.

- 2011-2017: Subway, Street, Others and Pre-Project Studies

- 2015-2017: Archway in Central Park, Fountain in New York City

- 2017-2018: Timeline Drawing & Withering Flowers

- 2019-2020: Excavation of music in black & white silent films of the Lumière brothers

- 2015-2016: Media Performance Noh [I-MY] Dual Noh Dancing of Vision and Reality

It would take too long to explain the whole process, so I chose to introduce a digest video of 3 works from "The Sound of Archway", produced in 2016, and 3 works from "Four Seasons / Ecosystem goes around on Spaceship Earth", produced in 2018. The 2016 series retained the afterimage at the moment the data was taken, but did not create a 3D wireframe from the timeline.

"The Sound of Archway" series participated in media art festivals in Greece, Romania, and Spain from 2016 to 2018. "Techno Synesthesia: Playmates Arch" is publicly archived at SUMULTAN MEDIA, Timișoara, Romania.

Play Video: Techno Synesthesia: The Sound of 3 Archways Digest / 03:00 / 2016

Individual works from the "Four Seasons" series participated in media art festivals in the United States, France, Spain, Indonesia, China, and Iran from 2018 to 2020, and all nine works in the series participated in the Web Biennale of the Istanbul Museum of Modern Art in Turkey. Also, three works were scheduled to participate in FILE 2020, planned for Sao Paulo (Brazil) from June to August 2020, but the festival has been postponed indefinitely due to Covid-19 (as of Dec. 2020).

Play Video: Withering Tulips Mystic Chord / 02:30 / 2018

On-line Catalog: The Rencontres Traverse Vidéo XIII p58-59 (French)

Play Video: The Spring Walk on Spaceship Earth / 02:30 / 2018

11th Annual International Sustainability Short Film Competition - The University of North Carolina

Play Video: The First Snowfall, November 15, 2018 / 03:20 / 2018

Web Biennial APEIRON 2020, Istanbul Contemporary Art Museum, Istanbul, Turkey

Online Exhibition Website: 9 Works

Online Exhibition 2019 / Four Seasons / Ecosystem goes around on Spaceship Earth

The last work was created in response to a call from the Artvideo KOELN, Germany under the theme of the 2020 Corona Pandemic. Corona! Shut down? / Corona Film Collection 2020 / Number 46. Kenji Kojima

Play Video: Techno Synesthesia: Corona! Shutdown? Open Mailbox / 03:15 / 2020

Work Concept Techno Synesthesia

I have written about the technical side. Since technology is there to support the concept of the work, I will finish by writing about the concept. The project "Techno Synesthesia" started in 2014, but the starting point of the concept is the development of "RGB MusicLab" in 2007.

The "techno" of "Techno Synesthesia" is computer technology. The technology has two parts. One is the composition method "algorithmic composition", and the other is "Sensor Device and Data", which detects and organizes the target data from the large amount of data acquired by a computer. "Sensor Device and Data" are technologies for manipulating binaries (which store and process information as 0s and 1s). In human terms, they correspond to the sensory organs as the entrance for information and to the organ that processes it (which can be called nerve cells). They also include computer devices that reproduce visual and auditory data.

"Algorithmic Composition" is a method of composing from data selected for the purpose, always by the same procedure (algorithm). It is a long-established composition method, not limited to computer composition.

"Synesthesia" is a perceptual phenomenon in which one sensation can be perceived by another. There are people in the world who have the perception that when you see a certain color, you hear a certain sound. It's vaguely understandable in thought, but unfortunately, it's a feeling that ordinary people like me don't have, so let's use contemporary computer technology instead of personal intuition to express it as an extension of art's thinking. That is "Techno Synesthesia." Since "Synesthesia" is not a scientifically proven event, I thought of it as a "Play of homo Ludens" using computer technology rather than a combination of science and art. It would be better.

"Cyborg" is the idea and technology of using science and technology to expand the human body and cognitive abilities and develop them as if they were biologically evolved. Probably there is a background to the idea of developing human beings as if they had evolved biologically. Specifically, with the rapid development of science and technology since the late 20th century. Cyborgs expand human physical abilities have become a reality, not science fiction. It is a new evolution that humankind which has risen from marine life to land for billions of years and has continued to undergo gradual physical changes for survival is rapidly advancing in the 21st century.

However, the art project "Techno Synesthesia" does not aim to extend the body like a cyborg or to expand the sight and hearing like telecommunications. It is not an expansion of the current human senses and abilities but is exploring the crossover of sensory organs with art using technology. Humans have five senses. In the process of evolution, we have slowly and slowly repeated generations to develop the five senses as a function to grasp the environment for survival. Humans use their eyes to grasp the shape and color of the outside world. How do creatures in the deep sea, who have no eyes, grasp the outside world? Or the skin may also play that role. Bats grasp the three-dimensional space with sound waves. We trust the information distributed by the five senses, so we may be ignoring the abilities of other sensory organs. Or science and technology may play a role in supplementing the senses, such as the expansion of physical fitness.

The Russian composer Alexander Scriabin wrote into the score of his symphony "Prometheus", in addition to the instruments of the orchestra, a part called "Luce" (Italian for "light"). The part specified colors of light determined by a fixed rule from the musical notes in his system (the score part of the symphony "Prometheus"). It was difficult to play the Luce part in actual performances in Scriabin's time. However, recent technological developments have made it possible to perform the whole symphony score, including "Luce" (Symphony "Prometheus" Video Document & Performance, Yale Symphony 2010).

Wassily Kandinsky, who created abstract painting for the first time, was impressed by Scriabin's work and was interested in synesthesia, and he tried to incorporate it into his own work. Kandinsky, of the quarterly art magazine "The Blue Rider", said that in his paintings yellow is the sound of middle C on the trumpet, black is the ending, and so on, connecting specific sounds with specific colors. In other words, instead of choosing a color intuitively each time, he produced his work according to a certain rule.

I hope that many attempts will be made to combine artistic sensations through today's computer-based data processing. We are now at a turning point in the times, just as figures that had floated above the ground in medieval painting came to stand on the ground in the Early Renaissance.


January 2021, Kenji Kojima http://kenjikojima.com/ Email: index@kenjikojima.com


Attribution-NonCommercial-NoDerivatives: You can download Kenji Kojima's Online Edition Videos (Public 1280x720) under a Creative Commons License. You may not use the material for commercial purposes.
